A 2023 report from the International Association of Chiefs of Police indicates that 74% of law enforcement agencies face critical delays because their digital tools don’t talk to each other. You know the strain of managing an RTCC where operators are forced to toggle between twenty different tabs while a high-priority incident unfolds. Software silos from vendors like Axon provide only a partial view, leaving your team to hunt manually for the right camera feed or license plate hit during the first sixty seconds of a call. Most control rooms already have the screens. What they’re missing is the layer that decides what goes on them, and escalates automatically when something needs attention.

In this guide, you’ll learn how to bridge the gap between fragmented data feeds and actionable situational awareness in modern law enforcement operations. We’ll examine how a unified operational intelligence layer, known as vis/ability, surfaces critical data through your video wall to counter operator fatigue. By the end, you’ll understand how to reduce cognitive load for your dispatchers and ensure that every mission-critical detail is seen exactly when it matters most.

Key Takeaways

- Identify the operational gap where fragmented data feeds and proprietary silos lead to missed incidents and delayed response times.

- Understand how vis/ability functions as the essential operational intelligence layer that decides what appears on your screens and escalates critical information automatically.

- Learn to build a proactive RTCC that moves beyond simple video monitoring to create a unified, actionable hub for public safety.

- Discover why partial solutions and vendor lock-in create mission-critical vulnerabilities and how a central hub resolves these integration challenges.

- Master a practical framework to detect, escalate, and visualize events across your video wall, mobile devices, and remote operational centers.

The Operational Gap in Modern Public Safety

A Real-Time Crime Center (RTCC) is an intelligence hub where raw data is turned into actionable intelligence. Public safety agencies face a critical disconnect between the massive volume of incoming data and their ability to act on it. This operational gap lets critical incidents slip through the cracks. In mission-critical environments, the gap is the space between data collection and human action, and when seconds determine the outcome of an emergency, agencies cannot afford a delay in that transition. A 2023 industry analysis found that 70 percent of agencies struggle with data integration, leading to reactive rather than proactive responses.

Fragmented Systems and Data Silos

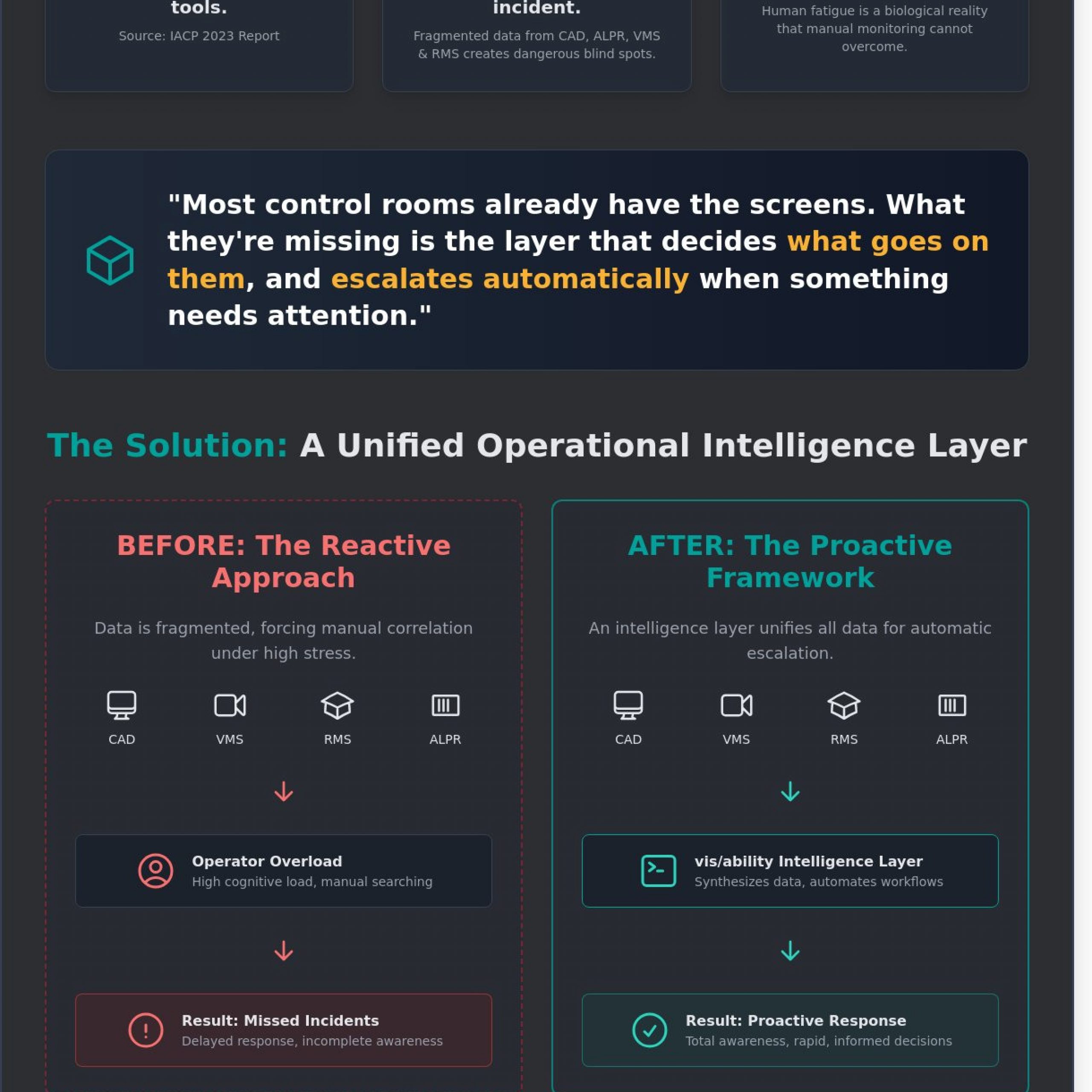

Modern dispatch centers struggle to manage multiple data feeds at once. Disparate software feeds, including Computer-Aided Dispatch (CAD), Records Management Systems (RMS), and Automated License Plate Recognition (ALPR), often create dangerous blind spots. Organizations may use platforms like Axon, but these tools remain partial solutions because they lack a unifying visibility layer. Correlating data manually during a high-stress incident forces operators to toggle between tabs and screens, wasting precious time. This fragmentation prevents a common operating picture and leaves commanders guessing about the overall tactical situation. For more on unifying these disparate feeds, visit our public safety solutions page.

Why Operators Miss Incidents on the Video Wall

The belief that more screens equals more safety is a persistent myth. In a 24/7 mission-critical environment, operator fatigue is a biological reality that technology must account for. Studies in human factors engineering show that after only 20 minutes of monitoring a video wall, an operator’s ability to detect a specific event drops by 50 percent. The result is a serious situational awareness problem in the control room: when systems don’t signal priority, operators are left to hunt for anomalies in a sea of static video.

This is precisely why operators miss incidents on video walls that rely solely on human observation. To solve this, agencies need vis/ability, an operational intelligence layer that surfaces only the most critical information through the video wall. By providing visibility into what matters, vis/ability acts as the bridge between raw data and human judgment. When a high-priority alert triggers, the relevant data, maps, and video feeds appear instantly for the entire team. This shift from manual monitoring to automatic escalation transforms the RTCC from a passive room of monitors into a proactive engine for public safety.

Defining the RTCC: Beyond the Video Wall

Public safety agencies often operate within a landscape of fragmented systems and data silos. Dispatchers and analysts frequently jump between 12 or more software applications to piece together a single incident. This manual process creates serious situational awareness problems in the control room, because critical details are often lost in the transition between windows. Without a mechanism for automatic escalation, the risk of a delayed response increases, and it is a primary reason operators miss the very incidents that video wall displays are meant to highlight.

Some organizations use standard VMS tools or platforms like Axon, but these are only a partial solution. They capture data but don’t synthesize it for the whole team. Without a unifying hub, critical alerts stay buried in a single operator’s tab. Delivering a common operating picture to an Emergency Operations Center (EOC) requires a more sophisticated approach to managing the multiple data feeds that dispatch teams rely on daily.

vis/ability as an Operational Intelligence Layer

The vis/ability Platform functions as the operational intelligence layer that sits above your existing software. It’s the bridge between raw data and human judgment, designed to filter the noise that often paralyzes decision-making. Instead of forcing staff to hunt for relevant information, the platform surfaces what matters most based on pre-defined triggers. The entire team sees the same reality at the same time, turning a chaotic stream of data into a structured flow of intelligence. A 2023 analysis of urban real-time centers suggests that operators often manage over 500 camera feeds simultaneously; vis/ability ensures they focus only on the ones requiring immediate action.

The Video Wall as a Decision Tool

Transforming the video wall into a dynamic asset for public safety requires moving past the concept of a static monitor. The Bureau of Justice Assistance defines the mission of an RTCC as the delivery of real-time technical support to enhance officer safety and efficiency. To meet this mission, the video wall must act as a canvas for surfaced intelligence rather than a simple display of camera grids.

Event-driven visualization changes the workflow of a shift commander by automatically pushing relevant maps, gunshot detection alerts, or LPR data to the wall the moment an incident occurs. This level of visibility doesn’t stop at the command center. The platform extends this critical intelligence to mobile devices in the field, giving units the same clarity as headquarters. Effective RTCC operations depend on this seamless flow of information from the hub to the street. If you’re ready to see how this intelligence layer fits into your existing workflow, connect with our specialists.
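To make event-driven visualization concrete, here is a minimal Python sketch of the idea: an incident type is looked up in a rule table, and the resulting set of panels is pushed to every destination so the wall and field devices show the same picture. It is not vis/ability’s configuration format or API; the incident types, panel names, destinations, and the `LAYOUTS` and `push_layout` names are hypothetical placeholders.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Hypothetical mapping from incident type to the panels that should appear.
# Incident types and panel names are illustrative, not a real product schema.
LAYOUTS: dict[str, list[str]] = {
    "gunshot_detected": ["incident_map", "gunshot_alert", "nearby_cameras"],
    "alpr_hit": ["incident_map", "lpr_record", "nearby_cameras"],
}
DEFAULT_LAYOUT = ["incident_map"]

# Hypothetical display destinations: the main wall plus field devices.
DESTINATIONS = ["video_wall", "mobile_units"]


@dataclass
class Incident:
    incident_id: str
    incident_type: str


@dataclass
class Display:
    name: str
    panels: list[str] = field(default_factory=list)


def push_layout(incident: Incident, displays: dict[str, Display]) -> None:
    """Replace the panels on every destination with the layout for this incident type."""
    layout = LAYOUTS.get(incident.incident_type, DEFAULT_LAYOUT)
    for name in DESTINATIONS:
        # Each display gets its own copy of the same panel list,
        # so the wall and the field units show an identical picture.
        displays[name].panels = list(layout)


displays = {name: Display(name) for name in DESTINATIONS}
push_layout(Incident("INC-1", "gunshot_detected"), displays)
print(displays["video_wall"].panels)
print(displays["mobile_units"].panels == displays["video_wall"].panels)
```

Keeping the layout table as data rather than code means the choice of what appears for each incident type could, in principle, be changed without touching the dispatch logic.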

The Limitations of Partial Solutions and Proprietary Silos

Public safety agencies often fall into the trap of building an RTCC around a single vendor’s ecosystem. While platforms like Axon or Genetec provide specific utility, they frequently function as proprietary silos. This fragmentation creates a dangerous gap in situational awareness. When data stays trapped within individual applications, operators must manually toggle between screens to piece together a coherent story, a process that introduces delay and increases the probability of human error during high-stakes incidents.

Relying on a “single pane of glass” that only supports one manufacturer’s hardware leads to vendor lock-in. This limits an agency’s ability to evolve as new technologies emerge. A 2023 report by the Police Executive Research Forum highlighted that nearly 85% of agencies struggle with data interoperability across different digital platforms. Without a vendor-agnostic intelligence layer, the video wall becomes a passive display of disconnected feeds rather than a proactive tool for response. True operational clarity only happens when the answer appears on the wall exactly when it’s needed, driven by automated logic rather than manual searching.

Addressing the Axon Shortcoming

Axon has revolutionized evidence management and body-worn camera technology. It’s an essential tool for post-incident review and digital evidence storage. However, an evidence platform isn’t an operational intelligence platform. Axon’s ecosystem is designed primarily for documentation and storage, not for the real-time orchestration of a complex RTCC environment. For a command center team to be effective, they need an integration layer that pulls data out of these silos and makes it actionable for every stakeholder in the room. Without this, body-cam footage remains a localized data point instead of a synchronized element of a broader tactical response.

The Need for a Unified Hub

Effective mission-critical operations require resilience that transcends vendor boundaries. vis/ability serves as this unifying hub, filling the critical gaps left by standard VMS or CAD software. It functions as an operational intelligence layer that surfaces through the video wall, so that disparate data feeds, from license plate readers to gunshot detection sensors, flow into a single, coherent stream of intelligence.

- Integrates Cybersecurity Common Operating Pictures to monitor network health and prevent outages.

- Automates incident escalation, reducing the cognitive load on dispatchers and analysts.

- Ensures cross-platform data is visible across mobile devices, huddle rooms, and the main command floor.

This approach turns the public safety center into a proactive engine. By prioritizing information based on the severity of the event, vis/ability ensures that operators don’t miss incidents buried in a sea of irrelevant video feeds. It’s the bridge between raw, fragmented data and the decisive action required to protect the community.
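Severity-based prioritization reduces to a ranking problem: the wall has a fixed number of tiles, so only the most urgent alerts should claim them. The Python sketch below assumes a hypothetical integer severity scale (higher is more urgent) and breaks ties by recency; the `Alert` type, the source labels, and `select_for_wall` are illustrative names, and vis/ability’s actual prioritization rules are not described in this article.

```python
from __future__ import annotations

import heapq
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    source: str       # illustrative labels, e.g. "alpr", "gunshot", "camera_analytics"
    severity: int     # hypothetical scale: higher means more urgent
    timestamp: float  # seconds since epoch; used to prefer newer alerts on ties


def select_for_wall(alerts: list[Alert], tiles: int) -> list[Alert]:
    """Return at most `tiles` alerts: most severe first, newest first within a severity."""
    return heapq.nlargest(tiles, alerts, key=lambda a: (a.severity, a.timestamp))


alerts = [
    Alert("camera_analytics", 1, 100.0),
    Alert("gunshot", 5, 90.0),
    Alert("alpr", 3, 95.0),
    Alert("alpr", 3, 99.0),
]
for alert in select_for_wall(alerts, tiles=2):
    print(alert.source, alert.severity, alert.timestamp)
```

`heapq.nlargest` avoids sorting the full alert list when only a handful of tiles are available, which matters little at small scale but keeps the selection cheap as the number of feeds grows.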

Engineering Situational Awareness: A Practical Framework

Modern public safety operations often struggle with fragmented systems and data silos that force operators to toggle between 15 different browser tabs. This disjointed workflow is the primary reason operators miss incidents on the video wall. When critical data is trapped in separate applications, the response is inherently reactive. The missing piece is vis/ability, an operational intelligence layer that surfaces through the video wall to provide immediate clarity.

Managing multiple data feeds in a dispatch center requires more than additional monitors. It demands a system that unifies CAD, GIS, and real-time video into a single stream. A 2022 industry study found that 60% of critical errors in command centers stem from cognitive overload caused by excessive manual data manipulation. By implementing vis/ability, an RTCC can reduce the number of clicks required to see an incident from twelve to zero. This framework keeps the human element focused on judgment rather than navigation.

Automating the Escalation Workflow

Effective situational awareness follows a linear progression: Detect, Escalate, and Visualize. When a sensor such as ShotSpotter reports gunfire or an ALPR camera registers a hit, the platform must act without waiting for manual intervention. The system automatically populates the video wall with the relevant camera feeds and suspect data. This shift from reactive to proactive monitoring allows supervisors to see the threat before the first 911 call is even processed. While tools like Axon provide essential evidence, they often act as partial solutions that remain siloed. Vis/ability integrates these tools into a unified hub, ensuring the right data reaches the right person at the exact moment of impact.
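The Detect, Escalate, and Visualize progression can be sketched as a small rule engine: a sensor event arrives (detect), pre-defined rules are matched without operator input (escalate), and the matching rules yield the display actions to run (visualize). This is a hypothetical Python illustration, not vis/ability’s rule syntax; `SensorEvent`, `Rule`, `RULES`, and the action names are invented for the example.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass
class SensorEvent:
    kind: str      # illustrative labels, e.g. "gunshot" or "alpr_hit"
    location: str
    detail: str


@dataclass
class Rule:
    """Hypothetical escalation rule: when `matches` returns True, queue `actions`."""
    name: str
    matches: Callable[[SensorEvent], bool]
    actions: list[str]


RULES = [
    Rule("gunshot", lambda e: e.kind == "gunshot",
         ["show_cameras_near_location", "show_map", "notify_supervisor"]),
    Rule("stolen_plate", lambda e: e.kind == "alpr_hit" and "stolen" in e.detail,
         ["show_cameras_near_location", "show_plate_record"]),
]


def detect_escalate_visualize(event: SensorEvent) -> list[str]:
    """Detect: accept the event. Escalate: match every rule automatically.
    Visualize: return the ordered, de-duplicated display actions to execute."""
    actions: list[str] = []
    for rule in RULES:
        if rule.matches(event):
            for action in rule.actions:
                if action not in actions:
                    actions.append(action)
    return actions


event = SensorEvent("alpr_hit", "Main St & 5th Ave", "plate flagged stolen")
print(detect_escalate_visualize(event))
```

An event that matches no rule returns an empty list, which is the point of the design: routine ALPR reads never reach the wall, and only events meeting a rule claim screen space.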

Design for Decision Making

The physical environment must support the digital workflow. Professional Control Room Design Services ensure that the layout facilitates collaboration across distributed teams. Whether in a main RTCC, a huddle room, or an EOC, the Common Operating Picture (COP) must remain consistent. Mobile vis/ability extends this intelligence to field units, allowing officers to see on their handheld devices exactly what the commander sees. This scalability addresses situational awareness problems across the control room and the EOC by providing a common operating picture that works on any hardware. By focusing on layout and automated visualization, agencies address the core reasons operators miss incidents on the video wall.

Implementing Your RTCC with Activu vis/ability

Building a modern RTCC involves more than mounting monitors and connecting camera feeds. Most agencies struggle with fragmented systems and data silos that prevent a rapid response. Dispatchers and analysts face situational awareness problems when they must manually toggle between dozens of disconnected applications. This fragmentation creates a dangerous gap where critical alerts are buried under a mountain of noise.

Activu vis/ability serves as the operational intelligence layer that surfaces through the video wall. It acts as the central nervous system for public safety operations, ensuring that the right information reaches the right person at the precise moment it matters. Instead of forcing operators to stare at hundreds of static camera feeds, vis/ability uses event-driven logic to highlight incidents. This transition from a reactive monitor wall to a proactive intelligence center reduces the cognitive load on staff and addresses the primary reasons operators miss incidents that video wall displays should have caught.

A Unified Hub for Public Safety

The vis/ability operational layer transforms how agencies manage high-stakes environments. While tools like Axon provide critical evidence and field visibility, they often function as isolated islands of data and are only partial solutions. Activu integrates these streams into a single, cohesive environment. It is the bedrock for infrastructure-critical decisions, providing a reliable common operating picture that spans command centers, huddle rooms, and mobile devices. This closes the most common gaps in EOC common operating pictures by ensuring every stakeholder sees the same validated data. To start designing your facility, you can Contact Us for a custom blueprint tailored to your jurisdictional needs.

Ensuring Long-Term Operational Continuity

Operational resilience requires a shift away from proprietary, closed-loop hardware. Agencies that lock themselves into specific hardware vendors often face “end-of-life” crises that render their centers obsolete within five years. Activu utilizes a COTS-based (Commercial Off-The-Shelf) approach to ensure long-term continuity. This vendor-agnostic methodology allows your rtcc to evolve as new sensors, gunshot detection systems, or AI analytics become available.

Our software-defined architecture addresses the technical hurdles dispatch teams face when managing multiple data feeds every day. By focusing on software integration rather than hardware dependency, we provide a path for future-proofing your investment. Activu has been a trusted partner in mission-critical environments since 1983, delivering the technical reliability required when lives are on the line. We provide the steady reassurance that your technology will perform under pressure, turning potential chaos into actionable intelligence.

Modernize Your Command Center for Faster Response

Effective public safety isn’t achieved by adding more monitors; it’s achieved by bridging the gap between fragmented data and actionable intelligence. While solutions like Axon or Genetec offer valuable inputs, they often remain siloed, leaving operators to struggle with data overload during high-stakes incidents. By implementing vis/ability, you integrate these disparate feeds into a unified framework that prioritizes the most critical events.

Top-tier law enforcement and federal agencies use this technology to reduce incident response times through automated escalation. This operational intelligence layer ensures that your RTCC functions as a proactive force multiplier rather than a passive observation deck. Whether in a central command center or on a mobile device, your team gains the clarity required to protect the community with confidence. Don’t let your legacy systems dictate your response speed.

Request a demo of the vis/ability platform for your RTCC today to see how we provide the visibility that matters most. Your mission deserves the highest standard of technical reliability.

Frequently Asked Questions

What is a Real-Time Crime Center (RTCC)?

An RTCC is a mission-critical command center that integrates diverse data streams to provide law enforcement with a unified operational picture. Many agencies currently operate with siloed information, where 70 percent of critical data remains trapped in isolated systems. A modern RTCC closes this gap by centralizing video feeds, license plate readers, and gunshot detection into a single interface.

How does an RTCC improve officer and community safety?

An RTCC improves safety by delivering precise, real-time intelligence to officers before they arrive on a scene. When dispatchers see what is happening via vis/ability, they can warn units about weapons or hazards that 911 callers might miss. This proactive approach reduces response times by an average of 15 percent according to Department of Justice studies. It transforms the video wall from a passive display into an active tool for rapid intervention, ensuring that field units aren’t walking into unknown threats.

What is the difference between an RTCC and a standard dispatch center?

Standard dispatch centers focus on call intake and unit assignment, while an RTCC provides deep analytical support for active incidents. Most dispatch environments suffer from fragmented systems where operators must toggle between 5 or 6 different applications. The RTCC uses vis/ability to unify these sources, ensuring that the most relevant data surfaces automatically during high-stress events. This intelligence layer allows the team to focus on tactical decisions rather than managing software interfaces.

Can an RTCC integrate with my existing camera and CAD systems?

Yes. An RTCC is designed to act as a central hub for all existing technology, including CAD and camera networks. While platforms like Axon provide valuable data, they’re only a partial solution because they often remain siloed from other critical feeds. Vis/ability integrates these disparate tools into a single common operating picture.

What are the most common situational awareness problems in control rooms?

The most common control room situational awareness problems include data overload and the “wall of glass” fatigue where operators miss incidents on the video wall. Research shows that after 20 minutes of monitoring, operators can miss up to 95 percent of significant activity on a screen. This gap exists because systems don’t prioritize information. Vis/ability solves this by filtering noise and surfacing only the critical alerts that require immediate human judgment, preventing vital details from being lost in the chaos.

How much does it cost to build a Real-Time Crime Center?

The cost of building an RTCC varies based on the scale of integration and the number of existing assets leveraged. According to 2023 Bureau of Justice Assistance grant data, initial investments for mid-sized cities often range from $500,000 to over $2 million. Agencies can reduce these costs by using vis/ability to connect existing hardware rather than replacing it. This approach maximizes previous investments while adding the essential intelligence layer needed for proactive public safety without requiring a total infrastructure overhaul.

How do I manage multiple data feeds without overwhelming operators?

Managing multiple data feeds in a dispatch center requires an automated intelligence layer that prioritizes information based on incident severity. Operators shouldn’t have to search manually through 100 camera feeds during an emergency. Vis/ability manages this by automatically pushing relevant video and data to the video wall when a CAD trigger occurs. The team sees exactly what matters without being buried under a mountain of irrelevant data, allowing for faster and more accurate decision-making.
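One common way to pick "relevant video" when a CAD incident is created is geographic proximity: rank cameras by distance from the incident location and keep the closest few within a radius. The Python sketch below assumes that approach, plus a hypothetical camera inventory with latitude/longitude in degrees, a 300 m radius, and a four-camera limit; it uses the haversine great-circle formula on a spherical Earth and is not a description of how vis/ability selects feeds.

```python
from __future__ import annotations

import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres (spherical approximation)


@dataclass(frozen=True)
class Camera:
    camera_id: str  # hypothetical inventory entry
    lat: float      # degrees
    lon: float      # degrees


def haversine_m(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in metres between two points given in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def cameras_for_cad_event(lat: float, lon: float, cameras: list[Camera],
                          radius_m: float = 300.0, limit: int = 4) -> list[str]:
    """Return up to `limit` camera IDs within `radius_m` of the CAD location, nearest first."""
    in_range = []
    for cam in cameras:
        distance = haversine_m(lat, lon, cam.lat, cam.lon)
        if distance <= radius_m:
            in_range.append((distance, cam.camera_id))
    in_range.sort()  # sort by distance, then by ID for stable ordering on ties
    return [cam_id for _, cam_id in in_range[:limit]]


cameras = [
    Camera("CAM-A", 40.0, -75.0),
    Camera("CAM-B", 40.001, -75.0),  # roughly 111 m north of the incident
    Camera("CAM-C", 40.01, -75.0),   # roughly 1.1 km north, outside the radius
]
print(cameras_for_cad_event(40.0, -75.0, cameras))  # ['CAM-A', 'CAM-B']
```

A real deployment would likely also weigh camera field of view and availability, but distance alone is enough to show how a CAD trigger can replace a manual search through the camera list.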

What is the role of vis/ability in a public safety command center?

Vis/ability serves as the operational intelligence layer that surfaces critical information through the video wall and mobile devices. It acts as the bridge between raw data and human decision-making, ensuring that silos don’t prevent life-saving actions. It empowers the entire team to act with confidence during mission-critical events.